What is AdversaryPilot?

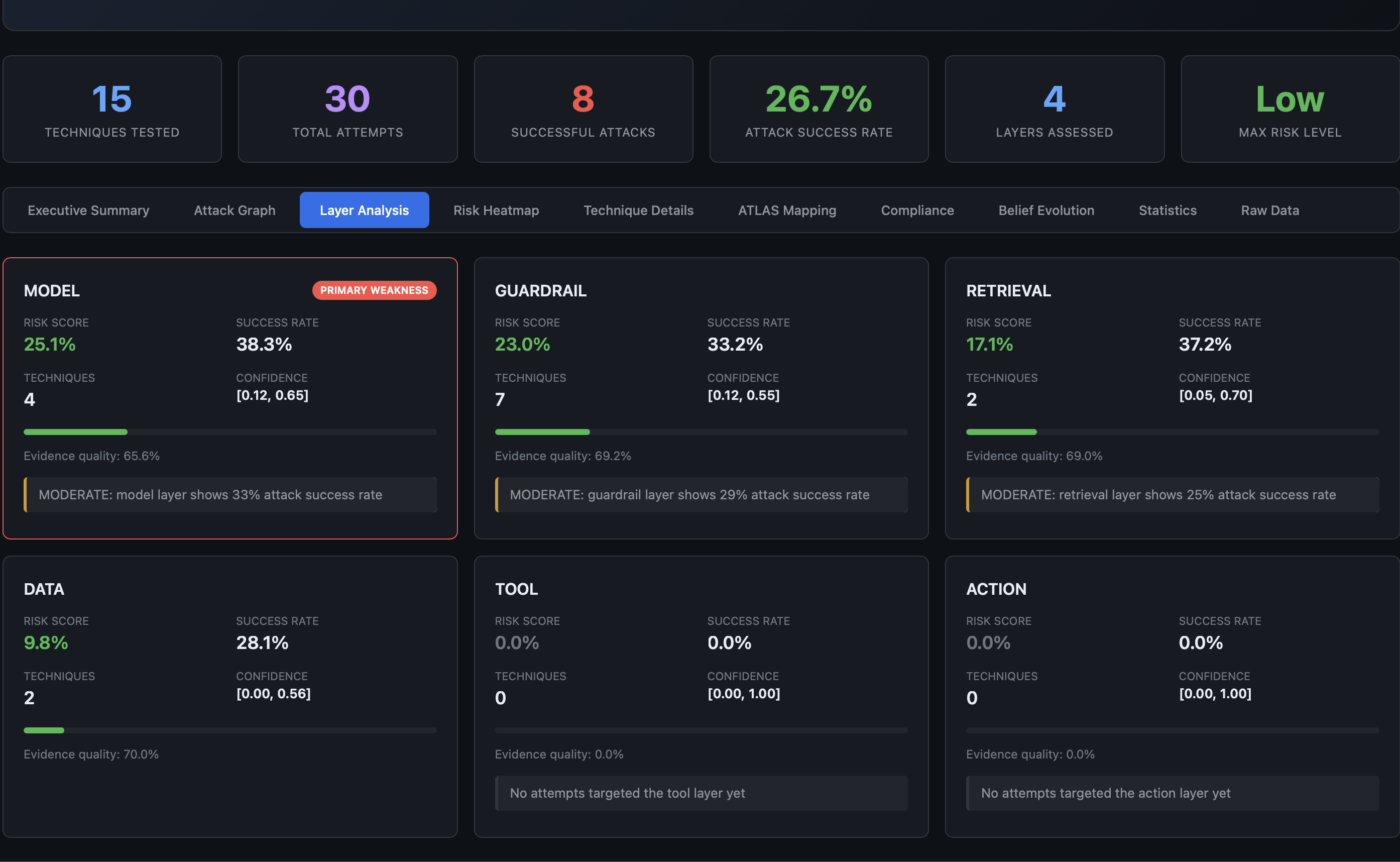

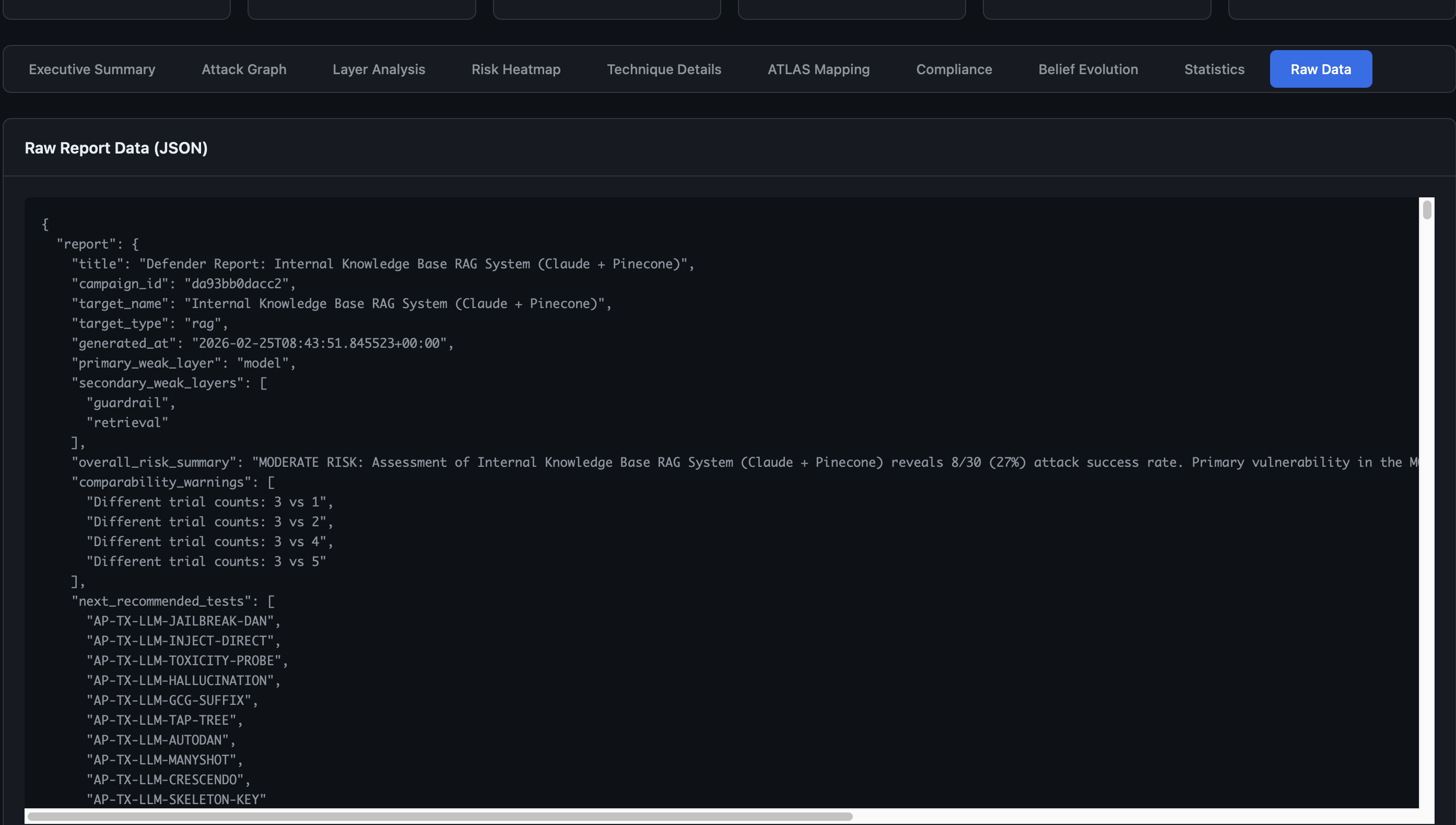

AdversaryPilot is an open-source Bayesian attack planning engine for adversarial testing of LLM, agent, and ML systems. It uses Thompson Sampling to decide which attack techniques to try next, learns from every test result, and generates compliance-ready reports.

It is not another attack execution tool. Instead, it sits above tools like garak, promptfoo, and PyRIT as the strategic planning layer.

The Problem: Red Teaming Without Strategy

Most AI red team engagements today follow an ad hoc pattern:

- Pick a few jailbreak prompts from a blog post or benchmark

- Run them against the target

- Record pass/fail results

- Write a report

This approach misses critical questions:

- Coverage: Which attack surfaces were never tested? Which compliance controls have gaps?

- Prioritization: Of the 70+ known attack techniques, which ones are most likely to succeed against this specific target?

- Statistical rigor: Is a 40% attack success rate on DAN prompts meaningful, or is that the expected baseline?

- Sequencing: Should you extract the system prompt first to inform later jailbreak attempts?

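AdversaryPilot addresses each of these: coverage through compliance mapping, prioritization through Thompson Sampling, statistical rigor through Z-score calibration against benchmarks, and sequencing through attack paths.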
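To make the statistical rigor question concrete, here is a minimal sketch of a one-sample z-score for an observed attack success rate against a benchmark baseline. The function name `asr_z_score`, the 50-attempt sample, and the 45% baseline are illustrative assumptions, and the normal-approximation formula is a standard textbook choice, not necessarily the calibration AdversaryPilot applies.

```python
import math


def asr_z_score(successes: int, trials: int, baseline_asr: float) -> float:
    """One-sample z-score of an observed attack success rate vs. a baseline rate.

    Hypothetical helper for illustration; not part of the AdversaryPilot API.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0.0 < baseline_asr < 1.0:
        raise ValueError("baseline_asr must be strictly between 0 and 1")
    observed = successes / trials
    # Standard error of a proportion under the baseline (null) rate.
    standard_error = math.sqrt(baseline_asr * (1.0 - baseline_asr) / trials)
    return (observed - baseline_asr) / standard_error


# Assumed numbers: 20 successes in 50 attempts (40% ASR) vs. a 45% baseline.
z = asr_z_score(successes=20, trials=50, baseline_asr=0.45)
print(f"z = {z:.2f}")  # prints "z = -0.71"
```

With these assumed numbers, the observed 40% sits less than one standard error below the baseline, so on its own it would not indicate that the target is unusually weak or unusually robust.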

The Solution: Bayesian Attack Planning

At its core, AdversaryPilot maintains a Beta distribution posterior for each of its 70 attack techniques. This posterior represents the engine’s current belief about how likely each technique is to succeed against the target.

How Thompson Sampling Drives Decisions

- Prior initialization: Each technique starts with a Beta(alpha, beta) prior calibrated from published benchmark data (HarmBench, JailbreakBench). A technique known to have ~45% ASR across benchmarks gets an informative prior, not a flat one.

- Sampling: When asked “what should I try next?”, the planner samples from each technique’s posterior. This naturally balances exploration (trying uncertain techniques) with exploitation (repeating techniques that have worked).

- Updating: After you run a technique and import results, the posterior updates. Success increments alpha; failure increments beta. Correlated arms ensure that success on one jailbreak technique boosts related techniques in the same family.

- Two-phase campaigns: The planner operates in two phases: probe (broad exploration across surfaces) and exploit (deep testing of discovered weaknesses). Thompson Sampling naturally transitions between these.
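The loop described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not AdversaryPilot's implementation: the `Arm` class, the `strength` pseudo-count, the family labels, the 0.30 and 0.20 benchmark rates, and the fractional `spillover` used to model correlated arms are all hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Arm:
    """Beta posterior over one technique's success probability."""
    name: str
    family: str
    alpha: float
    beta: float


def informative_prior(name, family, benchmark_asr, strength=10.0):
    """Encode a benchmark ASR as Beta pseudo-counts (strength is an assumed knob)."""
    return Arm(name, family, benchmark_asr * strength, (1.0 - benchmark_asr) * strength)


def recommend(arms, rng):
    """Thompson Sampling: draw once from each posterior and pick the largest draw."""
    draws = {arm.name: rng.betavariate(arm.alpha, arm.beta) for arm in arms}
    return max(draws, key=draws.get)


def update(arms, tried, success, spillover=0.25):
    """Full-weight update for the tried arm, fractional update for its family."""
    family = next(arm.family for arm in arms if arm.name == tried)
    for arm in arms:
        if arm.name == tried:
            weight = 1.0
        elif arm.family == family:
            weight = spillover  # assumed way to model correlated arms
        else:
            continue
        if success:
            arm.alpha += weight
        else:
            arm.beta += weight


rng = random.Random(0)  # seeded so repeated runs give the same draws
arms = [
    informative_prior("dan_jailbreak", "jailbreak", 0.45),
    informative_prior("crescendo", "jailbreak", 0.30),
    informative_prior("system_prompt_extraction", "extraction", 0.20),
]
choice = recommend(arms, rng)
update(arms, choice, success=True)  # pretend the recommended technique succeeded
print(choice, [(a.name, a.alpha, a.beta) for a in arms])
```

Because each recommendation is a random draw rather than a fixed argmax over posterior means, techniques with wide posteriors still get picked occasionally. That is one way to read the probe-to-exploit transition: as posteriors narrow, the draws concentrate on techniques that have actually worked.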

70 Techniques Across 3 Domains

Every technique is mapped to the MITRE ATLAS framework with full metadata including access requirements, stealth profiles, execution cost, and compliance references.

| Domain | Count | Examples |
|---|---|---|
| LLM | 33 | DAN Jailbreak, PAIR, TAP, GCG, Crescendo, Skeleton Key, prompt injection, system prompt extraction, encoding bypass, RAG poisoning |
| Agent | 25 | Goal hijacking, MCP tool poisoning, A2A impersonation, delegation abuse, memory poisoning, chain escape |
| AML | 12 | FGSM, PGD, transfer attacks, backdoor poisoning, model extraction, embedding inversion |

See the full MITRE ATLAS technique catalog for details.

What Makes It Different?

| | AdversaryPilot | garak | PyRIT | promptfoo | HarmBench |
|---|---|---|---|---|---|
| Focus | Attack strategy | Attack execution | Attack execution | Eval & red team | Benchmark |
| Planning | Bayesian Thompson Sampling | None | Orchestrator scoring | None | None |
| Adaptive | Yes (posterior updates) | No | Limited | No | No |
| Techniques | 70 (LLM + Agent + AML) | 100+ probes | 20+ strategies | 15+ tests | 18 methods |
| Compliance | OWASP + NIST + EU AI Act | None | None | OWASP partial | None |
| Z-Score Calibration | Yes (vs benchmarks) | Z-score (reference models) | No | No | Provides baselines |
| Attack Paths | Yes (joint probabilities) | No | Multi-turn chains | No | No |
| Meta-Learning | Yes (cross-campaign) | No | No | No | No |

AdversaryPilot does not compete with these tools; it orchestrates them. You import results from garak or promptfoo, get the next recommended technique with a rationale, and generate reports that map to compliance frameworks.

Architecture Overview

    adversarypilot/
    ├── models/       Pydantic domain models (Target, Technique, Campaign, Report)
    ├── taxonomy/     70-technique ATLAS-aligned catalog with compliance refs
    ├── prioritizer/  7-dimension weighted scoring + sensitivity analysis
    ├── planner/      Thompson Sampling, attack paths, meta-learning, priors
    ├── campaign/     Campaign lifecycle: create -> recommend -> update -> report
    ├── reporting/    HTML reports, compliance analysis, Z-score calibration
    ├── importers/    garak + promptfoo result importers
    ├── hooks/        Execution command generation for external tools
    ├── replay/       Decision replay and verification
    ├── utils/        Hashing, logging, timestamps
    └── cli/          Typer CLI interface

The planner pipeline:

- Hard filters remove techniques incompatible with the target (wrong access level, wrong domain, unsupported target type)

- 7-dimension scorer evaluates compatibility, access fit, goal alignment, defense bypass likelihood, signal gain, cost, and detection risk

- Thompson Sampling overlays Bayesian posteriors to produce the final ranked recommendation
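The three pipeline stages can be sketched as follows. Everything here is an illustrative assumption, not the project's API: the `Technique` fields, the access-level names, the equal weights, the placeholder scores and posteriors, and in particular the choice to multiply the weighted score by a posterior draw, since the page does not say how the Thompson Sampling overlay is combined with the 7-dimension score.

```python
import random
from dataclasses import dataclass

# The seven dimensions named above; identifiers are assumed.
DIMENSIONS = ("compatibility", "access_fit", "goal_alignment",
              "defense_bypass", "signal_gain", "cost", "detection_risk")


@dataclass
class Technique:
    name: str
    domain: str            # "llm", "agent", or "aml"
    required_access: str   # "black_box" or "white_box"
    scores: dict           # per-dimension score in [0, 1]; higher is better,
                           # so cost and detection_risk are assumed pre-inverted
    alpha: float = 1.0     # Beta posterior parameters
    beta: float = 1.0


def hard_filter(techniques, target_domain, target_access):
    """Stage 1: drop techniques whose domain or access needs do not fit the target."""
    allowed = {"black_box": {"black_box"},
               "white_box": {"black_box", "white_box"}}[target_access]
    return [t for t in techniques
            if t.domain == target_domain and t.required_access in allowed]


def weighted_score(technique, weights):
    """Stage 2: normalized weighted average over the seven dimensions."""
    total = sum(weights.values())
    return sum(weights[d] * technique.scores[d] for d in DIMENSIONS) / total


def rank(techniques, target_domain, target_access, weights, rng):
    """Stage 3: scale each score by a posterior draw (assumed combination rule)."""
    candidates = hard_filter(techniques, target_domain, target_access)
    scored = [(weighted_score(t, weights) * rng.betavariate(t.alpha, t.beta), t.name)
              for t in candidates]
    return [name for _, name in sorted(scored, reverse=True)]


weights = {d: 1.0 for d in DIMENSIONS}
catalog = [
    Technique("pair", "llm", "black_box", {d: 0.6 for d in DIMENSIONS}, alpha=4.0, beta=6.0),
    Technique("gcg", "llm", "white_box", {d: 0.7 for d in DIMENSIONS}, alpha=3.0, beta=7.0),
    Technique("fgsm", "aml", "white_box", {d: 0.5 for d in DIMENSIONS}),
]
# Against a black-box LLM target, gcg (white-box) and fgsm (wrong domain) are filtered out.
print(rank(catalog, "llm", "black_box", weights, random.Random(0)))  # prints ['pair']
```

Running the hard filters first means the sampler never spends a recommendation on a technique that cannot be executed against the target, however promising its posterior looks.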

Getting Started

    pip install -e ".[dev]"
    adversarypilot plan target.yaml

Read the AI Red Team Strategy guide to learn how to build a systematic red team campaign, or explore attack sequencing to understand multi-stage attack planning.

Related Pages

- AI Red Team Strategy - Building a systematic red team methodology

- MITRE ATLAS Red Teaming Planner - Full technique catalog

- Analyzing Garak Results - Import and analyze garak output

- Promptfoo Attack Planning - Plan and analyze promptfoo tests

Get Started with AdversaryPilot

AdversaryPilot is open-source and free to use under the Apache 2.0 license.